It seemed I started getting emails from people almost as soon as the virtual ink was dry on the latest delivery spec to come from Netflix. People were concerned about the language and the impression that Netflix had turned the clock back 10 years by using Dolby’s Dialog Intelligence software. In this article, we investigate to see if Netflix’s new delivery spec is a retrograde move, a pragmatic response to the changing climate, or a stroke of genius.

The Areas Of Confusion

One of the biggest areas of confusion hinges on the word 'gating'. The Netflix spec states...

Average loudness must be -27 LKFS +/- 2 LU dialog-gated. Peaks must not exceed -2db True Peak. Audio should be measured over full program according to ITU-R BS.1770-1 guidelines.

Someone commented...

I've been working in a top London facility this week and everyone there thinks it's madness to revert to -27. Opinion about dialnorm and LRA is divided though, but the acceptable LRA is mad. 4-20? People are going to wear out their volume buttons....

The confusion started because BS 1770-1 does not use the Relative Gate as specified in BS 1770-3 and included in the latest versions of the main loudness delivery specs EBU R128 and ATSC A/85. A user asked on a forum...

Why does this feel like a mistake? It says according to ITU-R BS.1770-1 and not 1770-3.

Someone else asked...

So wait, does that mean there are now two loudness standards on their service?

How could Netflix be using gating and yet specifying BS 1770-1, which does not use gating except for ‘silence’? It became apparent that the keywords in this spec are ‘dialog-gated’. Someone asked...

What does "dialogue-gated" mean in this particular case?

This appears to be Netflix picking up the old Dolby Dialog Intelligence algorithm, which was a precursor to the current BS 1770 based loudness workflows and was a proprietary piece of code built into the Dolby LM100 hardware and the Dolby Digital Meter software, which Dolby has recently discontinued.

Then we get to the number 27. -27 LKFS seems to be reinforcing the return to the Dolby Dialnorm workflows as -27 Leq A Weighted was a target for Dialnorm. Someone asked...

So it’s 3dB less than the TV standard (-24LKFS +/- 2dB)? Am I understanding this correctly or am I missing something?

In the new Netflix spec they quote -27 LKFS which could be interpreted as an attempt to mix the Dolby Dialnorm spec with BS 1770 spec. Someone commented on a forum...

I’ve heard complaints about a dozen times in the last 30 days from non-mixers complaining about trying to watch films that are too dynamic. People don’t watch movies at 80dB in their homes, and -27 only worsens things in my opinion.

All of this didn’t seem to make sense to me or anyone I talked to. Surely the people at Netflix couldn’t be wanting to turn the clock back 10 years and to pick up an obsolete model for measuring loudness. There must be sensible reasons for doing this, so I decided to investigate and see what I could find out.
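To make the gating distinction concrete, here is a minimal Python sketch of the difference between an ungated average (in the spirit of BS 1770-1) and the relative gate added in later revisions. It assumes the meter has already produced a list of block loudness values in LKFS. The block values, the function names and the simple three-level programme are all made up for illustration. The thresholds (-70 LKFS absolute, -10 LU relative) are the ones used by BS 1770-2 onwards. It does not attempt K-weighting, and it is not the Dolby Dialog Intelligence algorithm.

```python
import math

ABSOLUTE_GATE_LKFS = -70.0  # absolute threshold (BS 1770-2 and later)
RELATIVE_GATE_LU = -10.0    # relative threshold, below the absolute-gated level


def energy_mean(block_loudness):
    """Average block loudness values (LKFS) in the power domain."""
    powers = [10 ** (level / 10) for level in block_loudness]
    return 10 * math.log10(sum(powers) / len(powers))


def ungated(block_loudness):
    """Plain power average over every block, with no gate at all."""
    return energy_mean(block_loudness)


def relative_gated(block_loudness):
    """Two-stage gate: drop near-silence, then drop blocks more than
    10 LU below the level of what is left."""
    above_abs = [lv for lv in block_loudness if lv > ABSOLUTE_GATE_LKFS]
    threshold = energy_mean(above_abs) + RELATIVE_GATE_LU
    return energy_mean([lv for lv in above_abs if lv > threshold])


# Hypothetical programme: loud music, dialog and quiet ambience.
blocks = [-20.0] * 60 + [-27.0] * 100 + [-45.0] * 40

print(f"ungated:        {ungated(blocks):.1f} LKFS")
print(f"relative gated: {relative_gated(blocks):.1f} LKFS")
```

In this made-up example the relative gate discards the quiet ambience, so the gated figure comes out about 1 LU higher than the ungated one. Neither number tells you where the dialog sits, which is the gap a dialog-gated measurement is meant to fill.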

Where Netflix Could Be Coming From

It’s generally accepted that BS 1770 is working pretty well for broadcast content. The same may not be true in a world of different delivery platforms, where traditional broadcasters run online systems like the BBC iPlayer alongside their conventional terrestrial transmission systems. Check out our article Loudness 4 Years On. Has Loudness Compliance Worked Or Caused More Problems Than It Has Solved?

Now we have the new kids on the block like Netflix, Hulu, Apple and Amazon Prime, who don’t have terrestrial or satellite transmission systems. Theirs is a purely online delivery model.

It could be argued that a lot of their content sits between the cinema theatre delivery model and the broadcast delivery model. A lot of the content that Netflix and others deliver consists of films that were created for the cinema, and then there is the original content commissioned by the likes of Netflix, which seems to be created using the cinema production model rather than the broadcast production model.

In the debates about cinema loudness and dialog intelligibility, it is generally accepted that it isn’t appropriate simply to apply the broadcast BS 1770 standard to the cinema delivery model; there are other issues at play. Check out our articles Loudness And Dynamics In Cinema Sound - Part 1 and Part 2.

The cinema theatre delivery model, in theory, has end-to-end control. It has been designed to be a calibrated system, with standardised playback systems, quiet rooms to consume the content, big spaces, which will take a large dynamic range soundtrack.

In the cinema delivery world, they are now discussing these issues, triggered by user complaints, and changes in legislation in some countries. For example, I attended a session at last year’s AES Conference in New York in which the panel outlined the issues and challenges. Interestingly, from what I understand talking to various people, they are looking at a number of options including a variant of BS 1770 with LRA guidelines as well as some kind of dialog level based spec.

The problem Netflix has, as I see it, is that it considers itself closer to the cinema delivery model than the broadcast delivery model.

Back to the Netflix delivery spec and the dialog-gated issue.

It seems very strange for Netflix, or any other ‘broadcaster’ to be adopting a proprietary measurement system, in the Dialog Intelligence algorithm, which is only available in obsolete hardware and software. Most standards are deliberately based on open code and protocols. Well, it turns out that Dolby has now made the Dialog Intelligence code available for free. Nugen Audio spotted this and they chose to add it as an option with 2 presets into their VisLM loudness metering software.

This is also why in the Netflix Audio Mix Specifications & Best Practices v1.0 Netflix state...

For best results, measure with a dialog-gated meter and aim for average dialog levels between -25 LKFS to -29 LKFS with -27 LKFS as a target. We suggest Dolby Media Meter or NUGEN Vis-LM, which should be set to 1770-1 dialog-gated.
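As a rough illustration of what those numbers mean in practice, the sketch below checks a pair of meter readings against the figures quoted from the spec: a dialog-gated loudness of -27 LKFS +/- 2 LU and a true peak no higher than -2 dB. The function name and the idea of feeding it readings typed in from a meter are my own assumptions, not part of any Netflix or Nugen tool.

```python
def check_netflix_delivery(dialog_gated_lkfs, true_peak_dbtp,
                           target=-27.0, tolerance=2.0, peak_ceiling=-2.0):
    """Return a list of problems; an empty list means both readings pass.

    The defaults are the values quoted from the Netflix spec. The readings
    themselves must come from a dialog-gated meter; nothing is measured here.
    """
    problems = []
    if abs(dialog_gated_lkfs - target) > tolerance:
        problems.append(
            f"dialog-gated loudness {dialog_gated_lkfs} LKFS is outside "
            f"{target - tolerance} to {target + tolerance} LKFS"
        )
    if true_peak_dbtp > peak_ceiling:
        problems.append(
            f"true peak {true_peak_dbtp} dBTP exceeds {peak_ceiling} dBTP"
        )
    return problems


# Hypothetical readings typed in from a meter.
print(check_netflix_delivery(-26.1, -3.0))  # both readings inside the limits
print(check_netflix_delivery(-23.5, -1.0))  # dialog too loud, peak too high
```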

So why would Netflix be adopting an outdated measurement protocol?

Maybe It Isn’t Such A Crazy Idea?

In my article Loudness and Dialog Intelligibility in TV Mixes - Are TV Mixes Becoming Too Cinematic? we learn that a number of broadcasters have added LRA recommendations to their delivery specifications.

The Digital Production Partnership (DPP) recently updated their unified UK delivery specs for all UK broadcasters and added this Guidance On Loudness Range…

Loudness Range - This describes the perceptual dynamic range measured over the duration of the programme - Programmes should aim for an LRA of no more than 18LU

Loudness Range of Dialogue - Dialogue must be acquired and mixed so that it is clear and easy to understand - Speech content in factual programmes should aim for an LRA of no more than 6LU. A minimum separation of 4LU between dialogue and background is recommended.

In Canada, the CBC and Radio Canada both now require that the LRA be less than 10 and some request 8. Also, the Integrated loudness for the complete program AND the integrated loudness of the dialogue stem must BOTH be -24 LUFS. Lastly, the momentary loudness must not exceed +10LU above the target loudness. So with a -24 LUFS target there, your momentary must always remain below -14 LUFS.

In my conclusion I suggested...

I completely agree with the Canadians in specifying the loudness for the dialog stem, it is almost as if they are resurrecting the old Dolby Dialnorm. Dialog is key and I always prefer to set the dialog to be around target loudness in my loudness planning and then build everything around it.

We need a way to measure the dialog level. We could just take the centre channel, but that is only possible on 5.1 systems. Also, dialog can be in other channels of a 5.1 mix, although I am not a fan of this, as divergence can result in comb filtering, especially when it comes to the downmix, and have a negative impact on intelligibility.

What we need is an algorithm that can analyse a mixed track and extract the dialog. Then use that to measure the dialog component of the mix.

This would need a new piece of code to be developed and then approved by the standards authorities, which could take years. Or we could use ‘one we made earlier’ in the Dolby Dialog Intelligence algorithm, which is now freely available for any brand to use in their loudness metering products.

It turns out from conversations with developers that although it is relatively old code, it is still remarkably fit for purpose. There is no doubt that a new algorithm could be developed using all the research and work that has been done by the likes of iZotope with tools like Dialog Isolate but, as I have said, all of this would take time and it would then need to be approved and made available to everyone, which would be a licensing challenge, to say the least.

What we have with the Dolby algorithm is a ‘fit-for-purpose’ solution, with a proven track record, that Dolby has now opened up for anyone to use without charge. This means we have a solution that enables us to measure both the loudness of the dialog in a mix as well as the possibility to measure the LRA so that the dynamic range of the dialog can also be specified.

Changes To LRA

Staying with LRA - reading the Netflix Audio Mix Specifications & Best Practices v1.0 they provide LRA recommendations…

The following loudness range (LRA) values will play best on the service:

5.1 program LRA between 4 and 20 LU

2.0 program LRA between 4 and 18 LU

Dialog LRA of 7 LU or less

Difference between FX content and Dialog of 4 LU

This includes a Dialog LRA. It is not yet clear exactly how this can be measured, as BS 1770 doesn’t have a Dialog LRA because until now it hasn’t been possible to measure one. You could put a BS 1770 based loudness meter across the dialog stem in the mix, or for complete mixes, you could put a loudness meter on the output of the Dolby Dialog Intelligence algorithm.
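For anyone who wants to keep an eye on these figures during a mix, here is a small sketch that compares measured LRA values with the Netflix guidance listed above. The limits are the published ones. The function name and the assumption that you already have a programme LRA and a dialog LRA (for example from a meter on the dialog stem) are mine. The 4 LU FX-to-dialog difference is left out because the spec wording doesn’t say how it should be measured.

```python
# Netflix LRA guidance as quoted above, in LU.
PROGRAM_LRA_RANGE = {"5.1": (4.0, 20.0), "2.0": (4.0, 18.0)}
MAX_DIALOG_LRA = 7.0


def lra_warnings(layout, program_lra, dialog_lra):
    """Return warnings for LRA readings that fall outside the guidance."""
    low, high = PROGRAM_LRA_RANGE[layout]
    warnings = []
    if not low <= program_lra <= high:
        warnings.append(
            f"{layout} program LRA {program_lra} LU is outside {low}-{high} LU"
        )
    if dialog_lra > MAX_DIALOG_LRA:
        warnings.append(
            f"dialog LRA {dialog_lra} LU is above {MAX_DIALOG_LRA} LU"
        )
    return warnings


# Hypothetical readings for a 5.1 mix and a stereo mix.
print(lra_warnings("5.1", 16.5, 6.0))
print(lra_warnings("2.0", 19.0, 8.0))
```

Because these are recommendations rather than hard limits, the sketch returns warnings instead of a pass or fail.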

Whilst we are on these Netflix recommendations and LRA, it is my view that an LRA of 18 to 20 is way too large as an acceptable maximum LRA.

Look at the Canadian recommendations: they require that the LRA be less than 10 and some request 8. The DPP delivery spec here in the UK says that programmes should aim for an LRA of no more than 18LU (which I think is still too high for domestic consumption), that the dialog LRA should be no more than 6LU in speech programmes and that there should be a minimum separation of 4LU between dialogue and background.

Do these not look remarkably similar to the new Netflix delivery spec? My only concern is the overall LRA of up to 20.

Consumers Are Still Reaching For The Remote Control

It is my view and experience, even with a decent quality speaker system in a relatively quiet living room, that a mix with an LRA of 18 to 20 is far too much. We find ourselves reaching for the TV remote to adjust the volume during a program, and that for me is a fail.

Remember that one of the key reasons for introducing loudness normalisation workflows was to stop consumers from having to reach for the remote control during a program, across program junctions or when switching from one channel to the next. So if a mix has an LRA that is so large that consumers have to adjust the volume, like The Grand Tour or Blue Planet as I outlined in my article Are TV Mixes Becoming Too Cinematic?, then we need to do something about it, which is what broadcasters like the Canadians and the British are doing with their revisions.

The other issue I discovered when I studied The Grand Tour and Planet Earth 2 was that the dialog loudness had been pushed down because the integrated loudness had been skewed by the use of extended sequences of loud dramatic music and effects. We are lucky that we have a living room with a relatively low ambient noise level. A lot of domestic environments will be much noisier than ours.
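The way extended loud sequences pull the dialog down can be sketched with a little arithmetic. The example below treats integrated loudness as a simple power average of programme sections with no gating, which is a simplification, and then normalises the whole mix to -23 LUFS to see where the dialog ends up. The two programmes, their section lengths and their levels are invented for illustration and are not measurements of any real show.

```python
import math


def integrated_loudness(sections):
    """Ungated power average of (fraction_of_runtime, loudness_lufs) pairs."""
    total_time = sum(fraction for fraction, _ in sections)
    power = sum(fraction * 10 ** (lufs / 10) for fraction, lufs in sections)
    return 10 * math.log10(power / total_time)


def dialog_after_normalising(sections, dialog_lufs, target=-23.0):
    """Dialog level once the whole mix is shifted to hit the target."""
    offset = target - integrated_loudness(sections)
    return dialog_lufs + offset


# Hypothetical mixes: dialog sits at -23 LUFS in both before normalising.
speech_led = [(0.9, -23.0), (0.1, -21.0)]  # short, modest music stings
cinematic = [(0.7, -23.0), (0.3, -15.0)]   # long, loud music and effects

for name, mix in (("speech-led", speech_led), ("cinematic", cinematic)):
    level = dialog_after_normalising(mix, dialog_lufs=-23.0)
    print(f"{name}: dialog ends up at {level:.1f} LUFS")
```

With these invented numbers the speech-led mix keeps its dialog within a fraction of an LU of the target, while the cinematic mix pushes the dialog roughly 4 LU below it.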

All of which makes me question the underlying validity of offering content with a high dynamic range as permitted by Netflix and others. Our experience as a family is that it is these very networks, where more dynamic mixes are permitted, that we have to turn on the subtitles for, to save us from constantly turning the volume up so we can hear and understand the dialog and then turning the volume down when we get to the loud dramatic music and effects.

But if the mixes did not have extended loud sections then surely the dialog would not be pushed down as far and the LRA would not be as large?

So Why Does Netflix Have Such A Large LRA?

I can see why Netflix might want a delivery spec with a wider dynamic range, because a lot of their content was made for the big screen rather than the small screen and it seems they have been transferring the same production values to the content they commission for the small screen. The ethos that they can transfer the content from the big screen to the small screen is, in my view, flawed. Commenting on my recent article Loudness and Dialog Intelligibility in TV Mixes - What Can We Do About TV Mixes That Are Too Cinematic?, Reid Caulfield, referring to the new Netflix specs, says...

Mixes meant for the "At-Home" environment MUST be mixed - or remixed, if it was originally done in a large theatre - in a near-field environment at 79dB. NOT a large theatre at 85 because someone needed to fit 40 people in the room. And, it cannot be mixed in that large environment simply with the large speaker arrays turned off and the near fields turned on. It needs to be mixed in a much smaller TV-oriented room.

He then suggests how this could be policed...

By specifying all elements be delivered in a Dolby Atmos-At-Home "wrapper." Even if the show has not been mixed as an Atmos presentation, by specifying delivery as an ADM file, they guarantee that the source room's size data and speaker layout is included in the associated metadata that travels with the data file and program content.

I couldn't agree more about the need to remix cinema content, or to mix content commissioned for consumption "At-Home", in smaller spaces at a more appropriate monitor level, like 79dB. I like his idea of using the Dolby Atmos-At-Home wrapper as it will include the metadata of the room it was mixed in.

But until the powers-that-be take up Reid's suggestion what can we do about mixes that have an excessive LRA for domestic consumption?

Let’s Do Some Tests

In the latest Netflix delivery specs, the dialog target loudness of -27 LKFS, measured with the Dolby Dialog Intelligence gating, seems to be on the low side to me. When I am mixing to R128 I aim to get my dialog close to, but just under, the target loudness of -23 LUFS (remember LUFS and LKFS are the same), which makes the Netflix dialog-gated target of -27 LKFS 4 LU lower. But in reality, does that make the dialog lower, or is it similar to the dialog in a conventional BS 1770 mix?

To find out, I tested the examples I referred to in previous articles, The Grand Tour and Planet Earth 2, to see how they fare using the Dialog Gated settings, as well as 2 examples of broadcast documentaries I have mixed.

Cow Dust Time was a documentary I mixed for BBC Radio 3, the public service classical music channel here in the UK, where the house style permits a wider dynamic range. It was also made for Between The Ears, a strand where the brief positively encourages soundscapes and more sound design than most radio documentaries.

Doctor’s Dementia was a more conventional documentary for BBC Radio 4, the public service speech channel here in the UK.

[Images: Planet Earth 2, The Grand Tour, Cow Dust Time, Doctor's Dementia]

| Program Title | Integrated Loudness (LUFS) | Dialog Loudness (LKFS) | Notes |
|---|---|---|---|
| Planet Earth 2 | -23.0 | -26.1 | 0 LU = -23 LUFS |
| The Grand Tour | -23.0 | -26.3 | 0 LU = -23 LUFS |
| Cow Dust Time | -23.0 | -22.8 | Results normalised to give a 0 LU integrated loudness. 0 LU = -23 LUFS |
| Doctor's Dementia | -23.0 | -23.8 | Results normalised to give a 0 LU integrated loudness. 0 LU = -23 LUFS |

What is interesting is that for both The Grand Tour and Planet Earth 2 the Dialog Intelligence measurement correctly reflects the lower level dialog that I picked up in my earlier article Are TV Mixes Becoming Too Cinematic? and produces a normalised dialog-gated loudness of -26.1 LKFS for Planet Earth 2 and -26.3 LKFS for The Grand Tour Ep 2, compared to the R128 full mix measurements of 0 LU (-23 LUFS). Looking at my two speech-dominated documentaries, the Dialog Gated measurements for Cow Dust Time and Doctor’s Dementia were much closer to the R128 full mix measurement of 0 LU (-23 LUFS).
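The gap between the full mix and the dialog can be read straight off those measurements. The short sketch below just subtracts one from the other for each programme. The figures are the ones in the table and the function name is my own.

```python
# (integrated loudness in LUFS, dialog-gated loudness in LKFS) from the table.
measurements = {
    "Planet Earth 2": (-23.0, -26.1),
    "The Grand Tour": (-23.0, -26.3),
    "Cow Dust Time": (-23.0, -22.8),
    "Doctor's Dementia": (-23.0, -23.8),
}


def dialog_offset(integrated, dialog):
    """How far the dialog sits from the full-mix loudness, in LU."""
    return round(dialog - integrated, 1)


offsets = {title: dialog_offset(*pair) for title, pair in measurements.items()}

for title, offset in offsets.items():
    print(f"{title}: dialog is {offset:+.1f} LU relative to the full mix")
```

The two TV programmes have dialog more than 3 LU below the full mix, while both radio documentaries stay within 1 LU of it.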

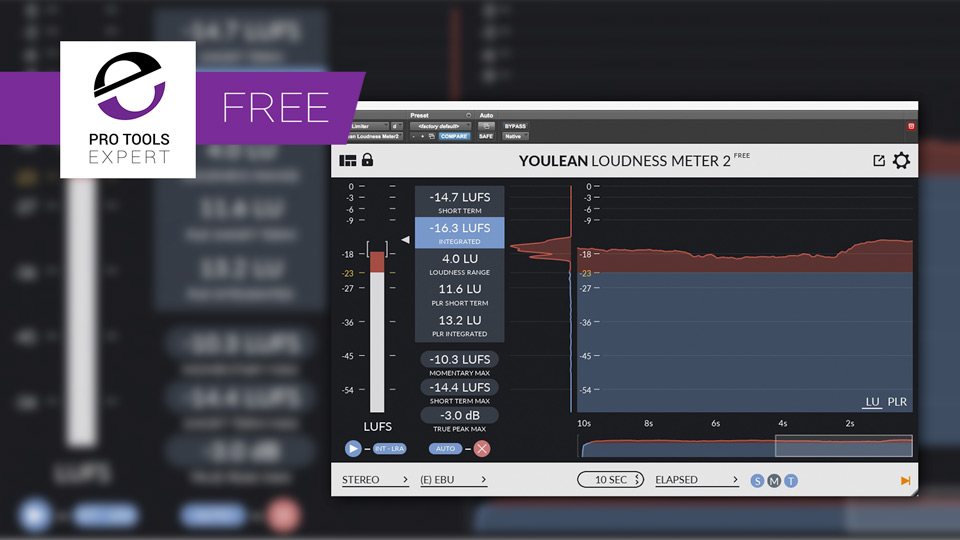

It would appear from these initial experiments that the dialog-gated measurement is able to ‘extract’ the dialog level of a mix very successfully. However, currently, the dialog-gated preset on Nugen Audio’s VisLM 2 doesn’t permit an LRA measurement.

I am hoping that in a future update Nugen Audio will offer a dialog-gated LRA measurement to support the ‘Dialog LRA of 7 LU or less’ element of the new Netflix spec, otherwise it won’t be so easy to measure the dialog LRA unless we put an instance of VisLM 2 on the dialog stem in a conventional BS 1770 mode.

As I have already mentioned, one of the challenges with the BS 1770 based delivery specs is that when the integrated loudness is calculated for a programme with more dynamic range, the dialog loudness will tend to end up lower than in, say, a documentary, where the dialog will sit much closer to the target loudness. What this means is that the dialog level will vary from one programme to the next, which can be unsatisfactory for consumers. What the Netflix spec will mean is that the dialog, typically the anchor content in a mix, will be much more consistent from program to program, albeit quieter than I would like. Dialog anchor levels were the very issue Dialnorm was designed to fix and something we have lost in moving to the BS 1770 loudness workflows.

Netflix is wanting to implement a dialog anchor into all their content and there is a good argument for this. Check out the views of people like Rob Ashard in our user’s look at loudness workflows 4 years on.

My biggest issue with loudness, and this opinion hasn’t changed in four years, is that there should be a higher allowance for light entertainment shows. Think of your -23LUFS as a bank of energy for you to use. The energy of audience reaction uses up that allowance, and if you want to retain the correct balance between presenters talking, and 600 audience going nuts, the latter have to be louder, otherwise, it just sounds held back. This means that your chat levels, in absolute terms, have to be quieter than they are for a non-audience show. That’s around 3-4dB quieter on a PPM.

The result of this is that if, say, Graham Norton follows a David Attenborough documentary, Graham's dialog level delivering to a big audience with performance energy will be quieter than the soothing, whispering tones of David Attenborough's commentary. That’s just wrong. Bonkers.

What If We Reduced The LRA? What Would Happen To The Dialog Level?

Unfortunately, I am not in a position to arrange for programmes like Planet Earth 2 and The Grand Tour to be remixed. What I can do is run the mixes through a plug-in like LM-Correct 2 from Nugen Audio, which is designed to repurpose content for different platforms. I reprocessed both programmes down to an LRA of 10, and then again to an LRA of 8, to see how that affected the dialog level using the Dialog Detection option in VisLM 2 from Nugen Audio. Here are the results…

| Program Title | Dialog Loudness (LKFS) | Program LRA (LU) |
|---|---|---|
| Planet Earth 2 | -26.1 | 16.5 |
| | -23.5 | 9.5 |
| | -23.0 | 7.6 |
| The Grand Tour | -26.3 | 12 |
| | -25.3 | 9.5 |
| | -24.7 | 7.6 |

As you can see, in both cases reducing the LRA of the mix brings the dialog level up. Planet Earth 2 started from a much larger LRA, more akin to a Netflix kind of mix, and by bringing the LRA down from 16.5 to 9.5 the dialog level came up from -26.1 to -23.5, making it a much more pleasant listen and one for which we won’t need to reach for the remote control.
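To put numbers on how much dialog level each reduction bought, the sketch below works out the change from the original mix for every processed version. The readings are the ones from my tests above. The data layout and the function name are just for illustration.

```python
# (program LRA in LU, dialog loudness in LKFS); the first entry is the original mix.
results = {
    "Planet Earth 2": [(16.5, -26.1), (9.5, -23.5), (7.6, -23.0)],
    "The Grand Tour": [(12.0, -26.3), (9.5, -25.3), (7.6, -24.7)],
}


def dialog_gain(rows):
    """Dialog level change in LU for each processed version vs the original."""
    (_, original_dialog), *processed = rows
    return [(lra, round(dialog - original_dialog, 1)) for lra, dialog in processed]


for title, rows in results.items():
    for lra, gain in dialog_gain(rows):
        print(f"{title}: LRA {lra} LU brings the dialog up by {gain} LU")
```

The wider the original LRA, the more the dialog comes up: Planet Earth 2 gains around 3 LU at the tightest setting, while The Grand Tour, which started from an LRA of 12, gains less than 2 LU.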

Conclusion

At first look, the new Netflix delivery specs came across as ill-informed and a 10-year step back in time. However, on reflection, and having studied the detail of the new Netflix delivery specs and their best practices suggestions, there is a lot of merit in what they are trying to do. Especially when you read the best practices document, they are trying to implement a spec and recommendations which pick up from my conclusions in my recent article Loudness and Dialog Intelligibility in TV Mixes - Are TV Mixes Becoming Too Cinematic?.

They are to be commended for introducing a dialog LRA, as that should go a long way to improve dialog intelligibility, as will the recommended difference between dialog and effects.

However, the Netflix spec has several issues for me. What works in a large space like a cinema theatre, where the sound has somewhere to go and the space is relatively quiet, is fine and works well as long as it isn’t overdone. However, this style will not work in smaller rooms. We have to mix content destined for domestic spaces in a space of a similar size. The specs need to require not only that mixes are done at a quieter level, but also that they are done in a space of appropriate size and proportions. I believe that Netflix is misguided to try and put together a spec that adopts a cinema model and imposes it on a domestic setting. For me, the problems are with their chosen dialog level and LRA recommendations.

In my view -27 for dialog levels is too quiet, or to put it another way, the loudness change from the dialog level to the loudest content in the show is too great.

I think dialog should be no more than 1LU below target loudness for the full mix. This would prohibit long periods of loud content that would skew the integrated loudness and so push down the dialog level. We need to look at techniques that will give us energy and impact but not long-term loud sequences.

This would also have a positive impact on the LRA and would reduce the unpleasant loud sections which trigger users reaching for the remote control mid-program.

My tests reducing the LRA with LM-Correct show that a smaller LRA will bring up the dialog level to a more appropriate level. If Netflix commissioned content that was mixed for an LRA max of 10 and a dialog target of -24LKFS then that would resolve most if not all the problems highlighted in this article. Even better, they should consider Reid's suggestion of using the Dolby Atmos-At-Home wrapper, which will contain the metadata of the space the program was mixed in. Pre-existing content could be processed using LM-Correct or, probably better still, the batch processing software AMB from Nugen Audio with their DynApt extension. That way content distributors like Netflix could relatively easily repurpose their back catalogue to a more suitable LRA and dialog level for domestic consumption.

One final shortcoming I have discovered in my tests is that although the Dolby Dialog Intelligence is remarkably good at extracting the level of dialog from a complex mix, it doesn’t give us a measure of intelligibility. Instead, we see recommendations like FXs need to be at least 4LU lower than the dialog. What if we had a dialog intelligibility meter that could give us a measurement of perceived intelligibility in a similar way to the BS 1770 loudness meter giving us a measurement of perceived loudness?

Netflix Response To Our Detailed Article On Their New Loudness Delivery Specifications

Scott Kramer, who is Manager, Sound Technology | Creative Technologies & Infrastructure at Netflix, reached out to us to ask if he could respond to this article. We always welcome responses to articles we produce and so welcomed Scott’s request to share his thoughts on how this new loudness spec came about.

Just to provide a little context about where Scott is coming from, he is an Engineer, Re-Recording Mixer and Mix Technician with a history of working on high profile content. He is skilled in Audio Engineering for Production and Post Production, Pro Tools, Audio Encoding, Feature Films and Sound Design.

Early on in his career he was a Mix Technician at Todd-AO, before becoming Supervising Sound Editor, Re-Recording Mixer at Wildfire Studios LLC for 7 years. Then he was Supervising Sound Editor / Re-Recording Mixer at Technicolor as well as working freelance as a Supervising Sound Editor / Re-Recording Mixer for 10 years before joining Netflix last year.

Over to you Scott…

In writing our specifications, we seek to preserve the best possible experience for Netflix members, while protecting the creative freedom of the creators. In the case of the new loudness specification, both groups were aligned. Many Re-Recording Mixers asked for dialog based measurement to simplify their workflow and to ensure loudness consistency for the audience.

We found that under the previous measurement method, a title could pass the spec with subaudible dialog on many devices, so long as it contained loud FX and Music content to balance it out. This is the downside of the relative-level gate in 1770-4 (-2/-3): it tends to over-estimate the loudness of wide-dynamic range content. We believe that dialog is the anchor element around which FX and Music are mixed. Just as the viewer sets their level based on dialog, mixers most often set a room level based on where the dialog “feels right.”

With the new spec, we sought to change how mixers measure, but not how teams mix. We measured our content which was compliant for the -24 LKFS +/- 2 LU full program spec, and found that dialog levels clustered around -27 LKFS. This approach, by using the dialog loudness, allows for as little workflow change as possible, while providing more dynamic range for creators that feel they need it. The choice of stating a 1770-1 measurement comes from the Dolby Media Meter terminology for a dialog based loudness measurement. Dialog based loudness measurement does not require the use of the relative-level gate specified in BS.1770-4, as the DI algorithm already applies a gate (a dialog-based gate) to the audio.

Mixers need not use the full dynamic range just because it’s available. We’ve found that titles with more limited dynamic range play best for Netflix members, so we published LRA guidance to assist teams in optimizing their sound mixes.

Thank you, Scott, for taking the time, for being open and for explaining where you are coming from in creating these new delivery specs. Scott has also provided links to the Netflix Spec and Best Practice documents as well as the article on Dolby’s website announcing that Dolby’s Speech Gating Technology is now free to integrate with a no-cost license.

Just as we welcomed Scott’s response, please do share your thoughts and observations in the comments below.