Apple introduced the "Personalized Spatial Audio Profile" in iOS 16 and macOS 13, with support in the latest Logic Pro update 10.7.5. This could be a game changer whether you mix in Dolby Atmos or just listen to Dolby Atmos content.

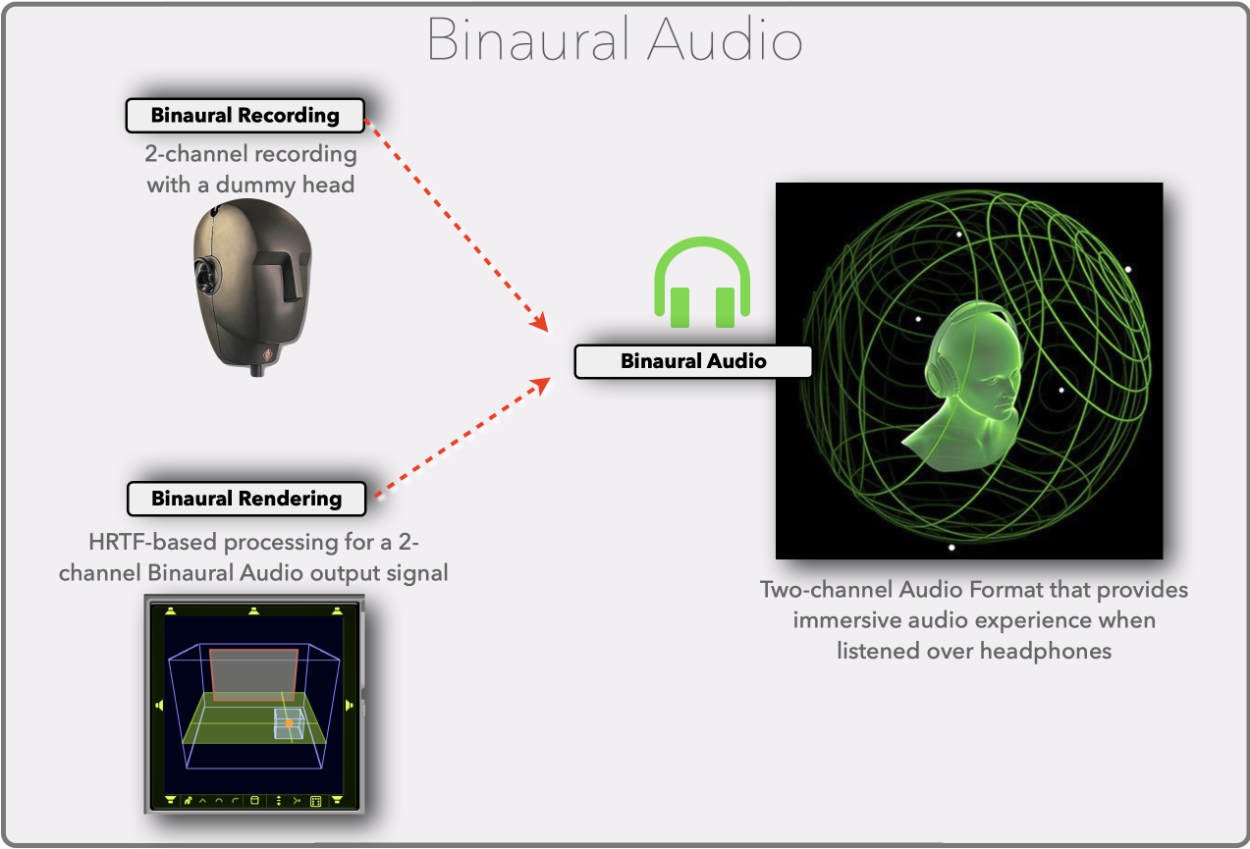

Binaural Audio

There are a few concepts we need to address to better understand (and appreciate) what Personalized Spatial Audio is and what it does. The first one is "Binaural Audio". This could be a three-part series by itself, and I cannot stress enough that if you mix in Dolby Atmos or any immersive sound format, you need to "wrap your head around" that concept.

Here is a summary: "Binaural" or "Binaural Audio" refers to a two-channel audio signal that needs to be listened to over headphones. It provides the experience of hearing audio signals as if they were playing around you in a three-dimensional sound field. In comparison, when listening to a standard two-channel stereo signal over headphones, you hear the signals more or less "inside" your head.

There are two ways to create a binaural audio signal:

• Binaural Recording: You use a dummy head that has a microphone built into each artificial ear, and you record these two channels like a standard stereo mic recording.

• Binaural Rendering: You apply signal processing to any existing audio source to create the two-channel binaural signal.

Binaural audio has been around for ages, but with the growing popularity of immersive sound formats in recent years, like Dolby Atmos, it now plays an important role not only in audio production but also in music consumption. Thanks to the advancements in computer technology, which helped to improve Binaural Rendering algorithms, music and film content that was mixed on elaborate (and expensive) surround speakers can now be enjoyed by a broad audience just by listening on their (inexpensive) headphones or earbuds.

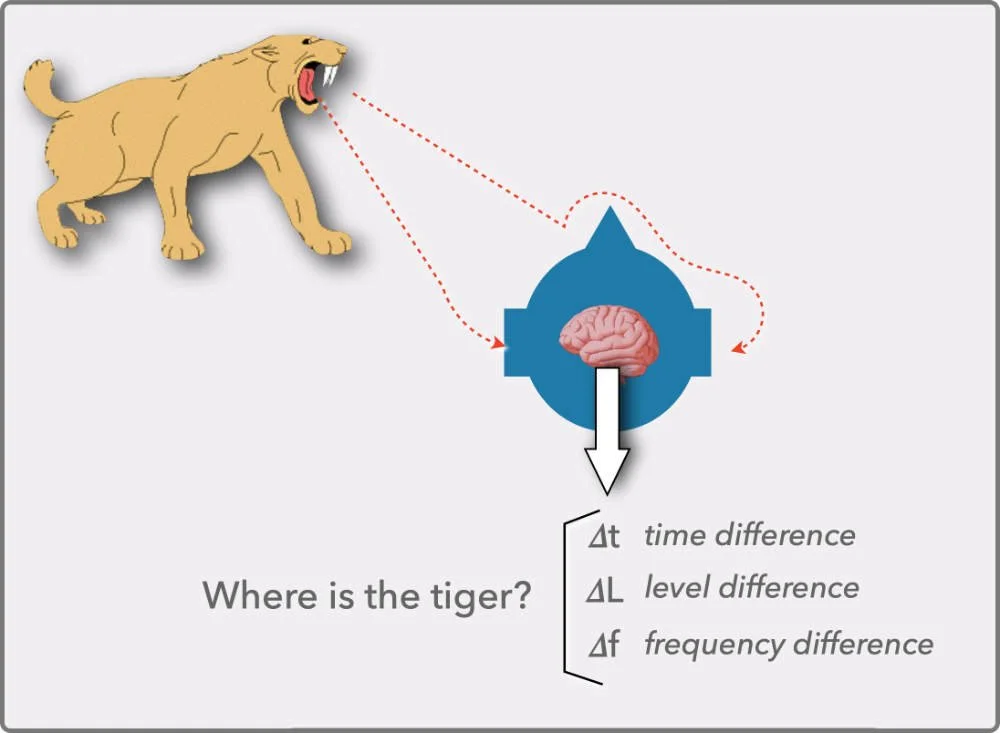

Where is the Tiger?

Here is a quick detour into psycho-acoustics to better understand the next term.

Our super-powerful brain is the reason why we can locate sound sources around us in a three-dimensional space with just two ears. It not only "hears" the sound entering our two ears, it also analyzes how the two signals differ in level, time (when they arrive), and frequency content. These differences are caused by the shape of our head, nose, ears, and shoulders, all the obstacles a sound signal encounters before reaching the eardrum.
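
To put a rough number on the time cue, here is a minimal sketch using Woodworth's classic spherical-head approximation, ITD = (a/c)(θ + sin θ), where a is the head radius and c the speed of sound. The head radius of 8.75 cm, the speed of sound of 343 m/s, and the function name are illustrative assumptions, not measurements from this article, and a real head is of course not a sphere.

```python
import math

HEAD_RADIUS_M = 0.0875      # assumed average head radius (illustrative)
SPEED_OF_SOUND_M_S = 343.0  # speed of sound in air at about 20 °C


def interaural_time_difference(azimuth_deg, head_radius_m=HEAD_RADIUS_M):
    """Woodworth spherical-head estimate of the arrival-time difference
    between the two ears, in seconds, for a distant source.

    azimuth_deg: 0 = straight ahead, 90 = directly to one side.
    """
    theta = math.radians(azimuth_deg)
    return head_radius_m / SPEED_OF_SOUND_M_S * (theta + math.sin(theta))


for angle in (0, 30, 90):
    itd_us = interaural_time_difference(angle) * 1e6
    print(f"{angle:>2} degrees: {itd_us:.0f} microseconds")
```

Even for a sound directly to one side, the difference stays well under a millisecond. That is the scale of detail the brain works with when it locates a sound.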

Throughout life, each person's brain trains itself to interpret those differences better and better so it can "hear" whether signals are coming from the left, right, side, behind, above, and so on. It is like a personalized "acoustic fingerprint" that is based on the individual shape of that person. Because these biometrics are slightly different for each person, that acoustic fingerprint is also somewhat different for each person.

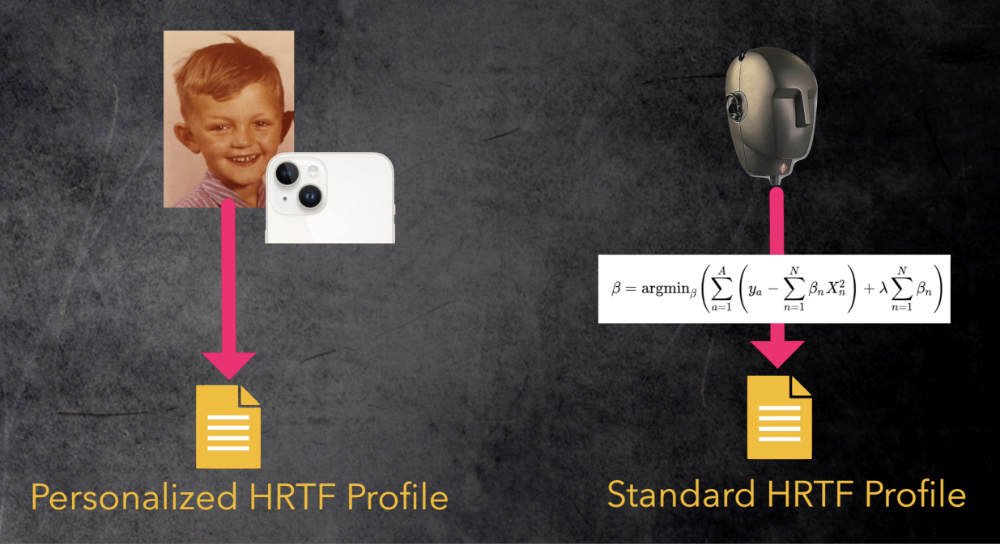

Head Related Transfer Function (HRTF)

Scientists developed a mathematical formula describing how all these obstacles around our heads change the sound before reaching the left and right eardrums. That formula is called the Head Related Transfer Function (HRTF).

Here is an example to demonstrate its use:

Let's assume you have a mono signal (a violin playing) and mix that to a two-channel output that you listen to over headphones. In this scenario, the sound of the violin is reaching both eardrums directly and is not altered by the shape of your head. Therefore, the brain doesn't detect any differences between the left and right ear to interpret the location. As a result, you "hear" the violin inside your head, the typical unnatural headphone listening phenomenon.

Now, you process the left and right output channels of that violin signal you feed to the headphones. You apply that HRTF formula, and by adjusting its parameters (think 3D panning), you are changing the left and right signal in such a way that you can trick the brain into thinking the violin is playing in front of you, behind you, or above you. The brain doesn't "know" that you have headphones on. It just recognizes differences that match the cues it has learned over a lifetime to tell you "I hear a violin playing, and based on how it sounds different on the left and right ear, I'm sure the violin is playing behind you to the left".

This process is called "Binaural Rendering". You process an audio signal to create a two-channel Binaural Audio signal that simulates a three-dimensional immersive sound experience.
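
In signal-processing terms, a renderer typically applies the HRTF by filtering the source with a pair of head-related impulse responses (HRIRs), one per ear. The sketch below shows that idea with a plain convolution. The two tiny HRIRs are made-up toy values (a delay and a level drop for the far ear), not measured data, and `render_binaural` is a hypothetical name chosen for this illustration.

```python
def convolve(signal, impulse_response):
    """Direct-form convolution of two lists of samples."""
    out = [0.0] * (len(signal) + len(impulse_response) - 1)
    for i, s in enumerate(signal):
        for j, h in enumerate(impulse_response):
            out[i + j] += s * h
    return out


def render_binaural(mono, hrir_left, hrir_right):
    """Filter one mono source with a left/right HRIR pair.

    Returns (left, right) sample lists padded to the same length.
    """
    left = convolve(mono, hrir_left)
    right = convolve(mono, hrir_right)
    length = max(len(left), len(right))
    left += [0.0] * (length - len(left))
    right += [0.0] * (length - len(right))
    return left, right


# Toy HRIR pair for a source on the listener's right (made-up values):
# the right ear gets the sound immediately at full level, the left ear
# gets it two samples later and quieter because the head is in the way.
HRIR_LEFT = [0.0, 0.0, 0.5]
HRIR_RIGHT = [1.0]

violin = [1.0, 0.5, 0.25]  # stand-in for a mono violin recording
left, right = render_binaural(violin, HRIR_LEFT, HRIR_RIGHT)
print("left: ", left)
print("right:", right)
```

Real HRIRs are much longer and differ for every direction, so moving a source around the listener means switching or blending between filter pairs.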

Standard HRTF

The concept of Binaural Rendering makes it possible to deliver a Dolby Atmos mix's three-dimensional immersive sound experience to a mass audience that can't afford to decorate their living room with 12+ speakers. Instead, the consumer only needs a pair of headphones or earbuds, and you deliver a binaurally rendered version of the Dolby Atmos mix, experienced by millions of listeners with their music streaming services. However, some people say they experience the immersive sound, and others say they don't. The main reason for that is the HRTF.

When the engineers create their binaural renderer, they model their HRTF algorithm based on a specific shape of a head and ears, which is an average shape or a standard shape. So it is a Standard HRTF, one size fits all. If your shape is close to those biometrics, then the differences between the left and right channel (calculated by the renderer) will trick your brain into correctly interpreting the location of the sound it hears. However, if the shape of your head, ears, etc., is different, then your brain gets the wrong cues and, for example, all the instruments in a Dolby Atmos mix that should come from the sides or the back could appear to come from the front. The result is that the spatial placement of the instruments in the mix is different or nonexistent for those "non-standard" listeners, resulting in a poor immersive sound experience for them.
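
As a toy illustration of that mismatch, the sketch below uses Woodworth's spherical-head approximation, ITD = (a/c)(θ + sin θ), to compute the timing cue a renderer would produce for a "standard" head and then asks at which angle a listener with a different head size would expect that same cue. Both head radii and the function names are hypothetical values chosen for this example. It models only the timing cue; real HRTFs also encode level and frequency differences, which are largely what separate front from back and above from below.

```python
import math

SPEED_OF_SOUND_M_S = 343.0


def itd_seconds(azimuth_deg, head_radius_m):
    """Woodworth spherical-head arrival-time difference between the ears."""
    theta = math.radians(azimuth_deg)
    return head_radius_m / SPEED_OF_SOUND_M_S * (theta + math.sin(theta))


def perceived_azimuth_deg(itd, head_radius_m):
    """Angle (0 to 90 degrees) at which a head of this size would expect
    the given time difference, found by bisection."""
    low, high = 0.0, 90.0
    for _ in range(60):
        mid = (low + high) / 2
        if itd_seconds(mid, head_radius_m) < itd:
            low = mid
        else:
            high = mid
    return (low + high) / 2


STANDARD_RADIUS_M = 0.0875  # assumed "average" head used by the renderer
LISTENER_RADIUS_M = 0.0950  # hypothetical listener with a larger head

intended = 60.0
cue = itd_seconds(intended, STANDARD_RADIUS_M)
heard = perceived_azimuth_deg(cue, LISTENER_RADIUS_M)
print(f"intended {intended:.0f} degrees, heard at about {heard:.0f} degrees")
```

In this simplified model, the listener with the larger head places the source a few degrees closer to the front than the mixer intended.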

Personalized HRTF

The solution for the one-size-does-not-fit-all HRTF problem is to create a Personalized HRTF for each listener and use that data for the binaural renderer. That means when person X is listening to a binaural mix in which, for example, a violin is playing in the back to the right, the HRTF algorithm needs to change the two-channel binaural audio signal the same way the sound would change, due to the personal biometrics of person X, if that violin were playing there for real. With the Personalized HRTF, the brain of person X receives the same cues it is trained for and, therefore, makes person X think the violin is playing at that position, even though the signal is played through headphones.

I demonstrate that with some motion graphics in my video "Logic Pro update 10.7.5 - including Personalized Spatial Audio", posted on my YouTube channel "Music Tech Explained - the visual approach".

Personalized Spatial Audio Profile

So how do you get your Personalized HRTF? The best way to create a Personalized HRTF for person X is to conduct elaborate acoustic measurements in an anechoic chamber to create data that describes how the head and ears of person X affect the HRTF. Of course, this is not practical and somewhat cost-prohibitive. In recent years, however, some companies like Genelec and Sony have provided solutions to make the creation of Personalized HRTFs more practical and affordable. Dolby started a beta program to create your own Personalized HRTF that you can use with the renderer in the Dolby Atmos Renderer app. The AES (Audio Engineering Society) even created the SOFA standard (Spatially Oriented Format for Acoustics) to improve the exchange of the measurement data. However, it is still a rather fragmented playing field with many incompatible solutions.

Last month, Apple offered its own free solution to its customers called Personalized Spatial Audio. A user can create a video of their head using their iPhone, and based on that information, the iPhone creates a Personalized HRTF, called a Personalized Spatial Audio Profile, that is used when playing back Dolby Atmos (or other spatial audio content) over supported Apple headphones.

Here are the steps and requirements:

You need an iPhone with a TrueDepth camera running iOS 16 (or later) with supported AirPods Pro or AirPods Max.

You put on your AirPods, go to the Settings app and tap the AirPods button on top.

On the next page, you tap the Personalized Spatial Audio button that guides you through the setup process.

During the three-minute procedure, you take a video of the front, left, and right sides of your head while moving your head as directed.

Once completed, the iPhone creates the Personalized Spatial Audio Profile that is automatically enabled on that iPhone. Now when you listen to any Dolby Atmos mix on Apple Music (or any other spatial audio content), the binaural renderer uses that HRTF profile, optimized for your head measurements, and delivers the optimal immersive experience.

That Personalized Spatial Audio Profile is linked to your Apple ID (that you use on your iPhone) and is available to all other Apple devices that are signed in to that Apple ID. On your Mac, you need macOS 13 to use the profile.

You can't toggle the profile on/off. If you disable Personalized Spatial Audio (in the same settings), it will be deleted, and you will have to create it again.

You can find a list of all supported devices here.

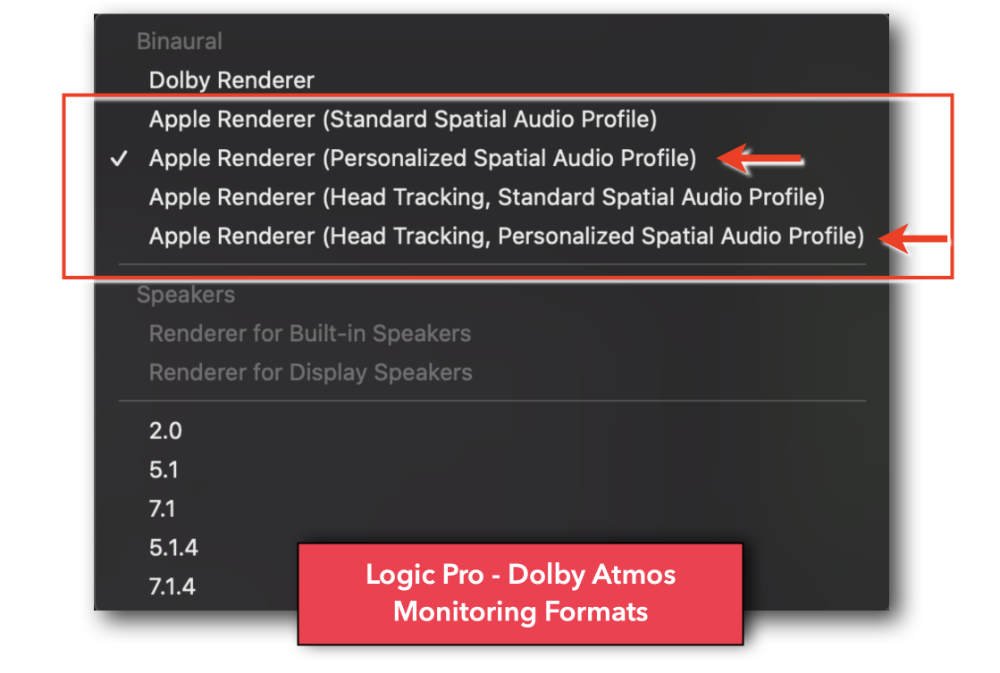

Implementation in Logic Pro

The Personalized Spatial Audio Profile not only improves the individual listening experience when listening to Dolby Atmos content on Apple Music. It is also implemented in the latest Logic Pro update v10.7.5, so you can work on your Dolby Atmos mixes in Logic Pro using the Binaural Renderer with your Personalized Spatial Audio Profile. That means it provides a more precise monitoring environment when mixing with AirPods using the binaural renderer.

And remember, Logic Pro is the only DAW that allows you to monitor through Apple's Spatial Audio renderer that is used on Apple Music (which sounds different from the Dolby Binaural Renderer).

Here is a screenshot with the available monitoring formats in Logic Pro when mixing in Dolby Atmos. Both Apple Renderer options (with and without Head Tracking) are now available using the Standard Spatial Audio Profile or your Personalized Spatial Audio Profile. These Personalized Spatial Audio Profiles are automatically available when you use macOS 13 and are signed in to the same Apple ID that you used on your iPhone when creating the Personalized Spatial Audio Profile.

I explain all that in more detail in my book "Logic Pro - What's New in 10.7.5"

The option "Renderer for Display Speakers" is a cool feature if you have the new Apple Display. In that case, Logic Pro will play back your Dolby Atmos mix rendered to the six built-in speakers of that display using its spatial audio speaker virtualization.

Further Reading

I hope you find the information in this article useful. If you want to learn more about Dolby Atmos and Logic Pro, please check out the books in my Graphically Enhanced Manuals (GEM) series.

The books are available as pdf, iBook, Kindle, and printed books with all the links on my website.